One key observation that helped launch the field of behavioral economics into stardom is called probability weighting: a human cognitive bias to assign higher probabilities to extreme events than … well, than what? Than what someone else thinks the probabilities should be. Below, I will present a very simple mechanistic explanation, most of all for the iconic probability weighting figure in (Tversky and Kahneman, 1992). The result is a now familiar theme: (behavioral) economics expresses a more or less robust observation in psychological terms, as a persistent cognitive error. Ergodicity economics explains the same observation mechanistically, and as perfectly rational behavior.

1 Definitions and key observation

I won’t go into how probability weighting is established empirically. Instead, I’ll jump into its definition and then mention some caveats.

Probability weighting: People tend to treat extreme events as though they had higher probabilities than they actually do, and (necessarily because of normalization) common events as if they had lower probabilities than they actually do.

Before going any further: what probabilities are, and even whether they have any actual physical meaning at all, is disputed. They are certainly not directly observable; we can’t touch, taste, smell, see, or hear them. Maybe it’s uncontroversial to say they are parameters in models of ignorance. Consequently, when we make statements about weighting, or misperceiving, probabilities, we will always be on shaky ground.

To keep the discussion as concrete as possible, let’s use a specific notion of probability.

Temporal frequentist probability: The probability of an event is the relative amount of time in which the event occurs, in a long time series.

For example, we could say “the probability of a traffic light being green is 40%.” Of course we don’t have to describe traffic lights probabilistically if we know or control their algorithms. But you can imagine situations where we have no such knowledge or control. If we were to say “the probability of rain falling somewhere in London between 3pm and 4pm on a given day in May is 10%,” we would mean that we had looked at a long time series of past days in May and found that it had rained somewhere in London in 10% of the periods from 3pm to 4pm.
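A minimal sketch of the temporal frequentist definition: the snippet below counts the fraction of one-second time steps in which a traffic light is green. The 60-second cycle with 24 seconds of green is a hypothetical schedule, chosen only so that the answer matches the 40% in the example; a real light (or London rainfall) would need its own observed time series.

```python
def light_state(t_seconds: int) -> str:
    """Hypothetical fixed cycle: 24 s green, then 36 s not green (60 s period)."""
    return "green" if t_seconds % 60 < 24 else "not green"


def temporal_frequency(event: str, total_seconds: int) -> float:
    """Relative amount of time in which `event` occurs over a time series of given length."""
    hits = sum(1 for t in range(total_seconds) if light_state(t) == event)
    return hits / total_seconds


# One day of one-second observations.
print(temporal_frequency("green", 24 * 3600))  # 0.4
```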

I’ve said what I mean by probability weighting, and what I mean by probability. Two more bits of nomenclature.

- I will refer to an experimenter, or scientist, or observer, as a Disinterested Observer (DO); and to a test subject, or observed person as a Decision Maker (DM). The DO is not directly affected by the DM’s decisions, but the DM is, of course.

- I will refer to the probability the DO uses (and possibly controls) in his model by the word “probability,” expressed as a probability density function (PDF), p(x); and to the probabilities that best describe the DM’s decisions by the term “decision weights,” expressed as a PDF, w(x).

Probability weighting, neatly summarized by Barberis (2013), can be expressed as a mapping of probabilities p into decision weights w, a simple function w(p). We could look at these functions directly, but in the literature it’s more common to look at cumulative distribution functions (CDFs) instead. So we’ll look at the CDF for p(x), which is F_p(x)=\int_{-\infty}^{x} p(s)\,ds, and the CDF for w(x), which is F_w(x)=\int_{-\infty}^{x} w(s)\,ds.

Fig. 1 is copied from Tversky and Kahneman (1992): an inverse-S curve describes the mapping between the cumulatives.

2 Mechanistic models that generate these observations

Let’s list some mechanistic models that predict this behavior. The key common feature is that the DM’s model will have extra uncertainty, beyond what the DO accounts for in his model. Behavioral economics assumes that the DO knows “the truth,” and the DM is cognitively biased and cannot see this truth. We will be agnostic: the DO and DM have different models. Whether one, both, or neither is “correct” is irrelevant for explaining the observation in Fig. 1. We’re just looking for good reasons for the difference.

2.1 DM estimates probabilities

How can a DM know the probability of anything? In the real world, the only way to find the probability as we have defined it (relative frequency in time) is to look at time series and count: how often was the traffic light green? How often did it rain between 3pm and 4pm in London in May? And so on.

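The result is a count: in n=68 out of N=680 observations the event occurred. The best estimate for the probability of the event is then 68/680=10\%. But we know a little more than that: counts of events are usually modeled as Poisson processes (the null model that assumes no correlation between events, a common baseline). In this null model, the uncertainty in a count goes as \sqrt{n}. Concretely, the variance of a Poisson count equals its mean, so the standard error of the count is about \sqrt{n}=\sqrt{68}\approx 8.2, and the standard error of the estimated probability is about \sqrt{n}/N\approx 1.2\%.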
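The arithmetic above can be sketched in a few lines of Python. The function name is hypothetical; it simply divides the Poisson standard error of the count, \sqrt{n}, by the fixed number of observations N to get an error bar on the probability.

```python
import math


def estimate_with_poisson_error(n: int, N: int):
    """Point estimate n/N and its standard error under a Poisson null model.

    The count n has standard deviation of roughly sqrt(n); dividing by the
    (fixed) number of observations N turns that into an error on the probability.
    """
    p_hat = n / N
    p_se = math.sqrt(n) / N
    return p_hat, p_se


p_hat, p_se = estimate_with_poisson_error(68, 680)
print(f"p = {p_hat:.3f} +/- {p_se:.3f}")  # p = 0.100 +/- 0.012
```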

A DO faced with these statistics is quite likely to put into his model the most likely value, 10\%. A DM, on the other hand, is likely to take into account the uncertainty in the count in a conservative way. It’s not good to be caught off guard, so let’s assume the DM adds to all probabilities one standard error, so that

w(x)=\frac{1}{c}\left[p(x)+\sqrt{p(x)}\right], \qquad (1)

where c ensures normalization, c=\int_{-\infty}^{+\infty} \left[ p(x) + \sqrt{p(x)}\right] dx.
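To see what Eq. 1 does, here is a sketch of a discrete analogue (an assumption: the post states Eq. 1 for densities) applied to a hypothetical five-outcome distribution with two rare extremes and one common outcome. The helper name `decision_weights` is illustrative, not taken from the post.

```python
import math


def decision_weights(probs):
    """Discrete analogue of Eq. 1: w_i = (p_i + sqrt(p_i)) / c."""
    raw = [p + math.sqrt(p) for p in probs]
    c = sum(raw)  # normalization constant, so the weights sum to 1
    return [r / c for r in raw]


# Hypothetical DO model: two rare extremes, two moderate outcomes, one common outcome.
p = [0.01, 0.09, 0.80, 0.09, 0.01]
w = decision_weights(p)
for pi, wi in zip(p, w):
    print(f"p = {pi:.2f} -> w = {wi:.3f}")
```

With these numbers, each 1% extreme receives a weight of about 4%, while the 80% outcome drops to about 63%: extremes are weighted up and the common outcome down, as in the definition of probability weighting.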

From here it’s just handle-cranking.

- specify the DO’s model, p(x)

- specify the DM’s model, w(x)

- integrate to find F_p(x) and F_w(x)

- plot F_w vs. F_p

Fig. 2 shows what happens for a Gaussian distribution and for a fat-tailed Student-t distribution.
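The handle-cranking steps can be sketched numerically with the Python standard library only. The assumptions are mine, not the post’s: the grid is truncated to [-20, 20], integrals are trapezoid sums, both CDFs are normalized on that truncated grid (which plays the role of c for F_w), and the Student-t has ν = 3 degrees of freedom. Instead of plotting (the last step), the sketch reads off F_w at a few values of F_p; passing the two lists to any plotting library gives the curve.

```python
import math


def gaussian_pdf(x):
    return math.exp(-0.5 * x * x) / math.sqrt(2 * math.pi)


def student_t_pdf(x, nu=3.0):
    """Student-t density with nu degrees of freedom (fat-tailed)."""
    log_norm = (math.lgamma((nu + 1) / 2) - math.lgamma(nu / 2)
                - 0.5 * math.log(nu * math.pi))
    return math.exp(log_norm) * (1 + x * x / nu) ** (-(nu + 1) / 2)


def cumulative(values, dx):
    """Running trapezoid integral of sampled values, starting at 0."""
    out = [0.0]
    for a, b in zip(values, values[1:]):
        out.append(out[-1] + 0.5 * (a + b) * dx)
    return out


def weighting_curve(pdf, lo=-20.0, hi=20.0, n=20001):
    """Return (F_p, F_w) on a grid over [lo, hi], with w taken from Eq. 1."""
    dx = (hi - lo) / (n - 1)
    xs = [lo + i * dx for i in range(n)]
    p = [pdf(x) for x in xs]                  # DO's model
    w = [pi + math.sqrt(pi) for pi in p]      # DM's model, before dividing by c
    F_p, F_w = cumulative(p, dx), cumulative(w, dx)
    # Normalizing by the final value absorbs truncation; for F_w it acts as 1/c.
    return [f / F_p[-1] for f in F_p], [f / F_w[-1] for f in F_w]


def weight_at(F_p, F_w, target):
    """F_w at the first grid point where F_p reaches `target`."""
    for fp, fw in zip(F_p, F_w):
        if fp >= target:
            return fw
    return F_w[-1]


for name, pdf in [("Gaussian", gaussian_pdf), ("Student-t, nu=3", student_t_pdf)]:
    F_p, F_w = weighting_curve(pdf)
    print(name, [round(weight_at(F_p, F_w, q), 3) for q in (0.01, 0.5, 0.99)])
```

For both distributions F_w lies above F_p in the left tail and below it in the right tail, the inverse-S pattern. Truncation matters more in the fat-tailed case, because \sqrt{p(x)} decays much more slowly than p(x); for ν ≤ 1 the integral defining c would not converge at all.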

Generally, probability weighting is a mismatch between the models of the DO and the DM. The canonical inverse-S shape represents the precautionary principle: it’s best for the DM to err on the side of caution, whereas the DO will often use most likely probabilities.

2.2 DO confuses ensemble-average and time-average growth

Incidentally, neglecting the detrimental effects of fluctuations (i.e. neglecting the precautionary principle) is one direct consequence of the ergodicity problem in economics: a DO who models people as expectation-value optimizers rather than time-average growth optimizers will find the same type of “probability weighting,” which should really just be seen as an empirical falsification of the DO’s model. The prevalence of these curves could therefore be interpreted as evidence for the importance of adopting ergodicity economics. See also this blog post by Ihor Kendiukhov.
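The gap between ensemble-average and time-average growth can be illustrated with a multiplicative coin toss of the kind commonly used in ergodicity economics. The factors 1.5 and 0.6 are hypothetical. The expected (ensemble) factor per round exceeds 1, so an expectation-value optimizer would accept the gamble, while the time-average factor along a single trajectory is below 1, so nearly every individual player loses over time.

```python
import math
import random

# Hypothetical multiplicative gamble: each round, wealth is multiplied by
# UP or DOWN with equal probability.
UP, DOWN = 1.5, 0.6

# Ensemble view: expected factor per round, averaged over many parallel players.
ensemble_factor = 0.5 * UP + 0.5 * DOWN   # 1.05, expectation grows 5% per round

# Time view: per-round factor along one long trajectory (geometric mean,
# since in the long run half the rounds go up and half go down).
time_avg_factor = math.sqrt(UP * DOWN)    # about 0.949, a typical player shrinks


def final_wealth(rounds, seed):
    """Wealth of one player after `rounds` plays, starting from 1."""
    rng = random.Random(seed)
    wealth = 1.0
    for _ in range(rounds):
        wealth *= UP if rng.random() < 0.5 else DOWN
    return wealth


losers = sum(final_wealth(1000, seed) < 1.0 for seed in range(200))
print(f"ensemble factor {ensemble_factor:.3f}, time-average factor {time_avg_factor:.3f}")
print(f"{losers} of 200 simulated players end below their starting wealth")
```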

2.3 DM assumes a broader range of outcomes

Recognizing probability weighting as a simple mismatch between the model of the DO and the model of the DM predicts all sorts of probability weighting curves. Now that we know what they mean, we can make predictions and test them. Fig. 3 is the result of the DO using a Gaussian distribution, and the DM also using a Gaussian distribution, but one with a higher mean and a higher variance. It looks strikingly similar to the observations of Tversky and Kahneman (1992).
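For two Gaussians the mapping can be sketched in closed form with Python’s standard-library `statistics.NormalDist`. The DM’s mean 0.5 and standard deviation 2 (against the DO’s standard normal) are hypothetical values; the post does not give the parameters behind Fig. 3.

```python
from statistics import NormalDist

DO = NormalDist(mu=0.0, sigma=1.0)  # DO's model (standard normal)
DM = NormalDist(mu=0.5, sigma=2.0)  # hypothetical DM model: higher mean, higher variance


def decision_cdf(fp):
    """F_w as a function of F_p: find x with F_p(x) = fp, then evaluate F_w(x)."""
    return DM.cdf(DO.inv_cdf(fp))


# With a standard-normal DO and sigma_DM > 1, the curves cross where
# (x - mu_DM) / sigma_DM = x, i.e. at x = -mu_DM / (sigma_DM - 1).
crossover = DO.cdf(-DM.mean / (DM.stdev - 1))

for fp in (0.01, 0.1, crossover, 0.9, 0.99):
    print(f"F_p = {fp:.3f} -> F_w = {decision_cdf(fp):.3f}")
```

With these values the curve lies above the diagonal for small F_p and below it for large F_p, crossing near F_p ≈ 0.31. Shifting the DM’s mean moves the crossing point; widening the DM’s distribution deepens the inverse-S.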

The inverse-S shape arises whenever a DM (cautiously) assumes a larger range of plausible outcomes than the DO. This happens whenever the DM has additional sources of uncertainty — did he understand the experiment? Does he trust the DO? Taleb (2019) calls the assumption by the DO that the DM will use probabilities as specified in an experiment the “ludic fallacy:” what may seem sensible to the designer of a game-like experiment may seem less so to a test subject.

3 Conclusion

Ergodicity economics carefully considers the situation of the DM as living along a time line. Probability weighting then appears not as a cognitive bias but as an aspect of sensible behavior across time. Unlike the vague postulate of a bias, it can make specific predictions: it’s often sensible for the DM to assume a larger variance than the DO, but not always. Also, a DO may be aware of the true situation of the DM, and both may use the same model, in which case there won’t be a systematic effect. In other words, the ergodicity-economics conceptualization adds clarity to ongoing research.

Ergodicity economics urges us to consider how real actors have to operate within time, not across an ensemble where probabilities make no reference to time. The precautionary principle is one consequence (because fluctuations are harmful over time); having to estimate probabilities from time series is another. Assuming a perfectly informed, perfectly rational DM, ergodicity economics predicts the observations that in behavioral economics are usually presented as a misperception of the world. Ergodicity economics thus suggests once again that economics jumps to psychological explanations too soon and without need.

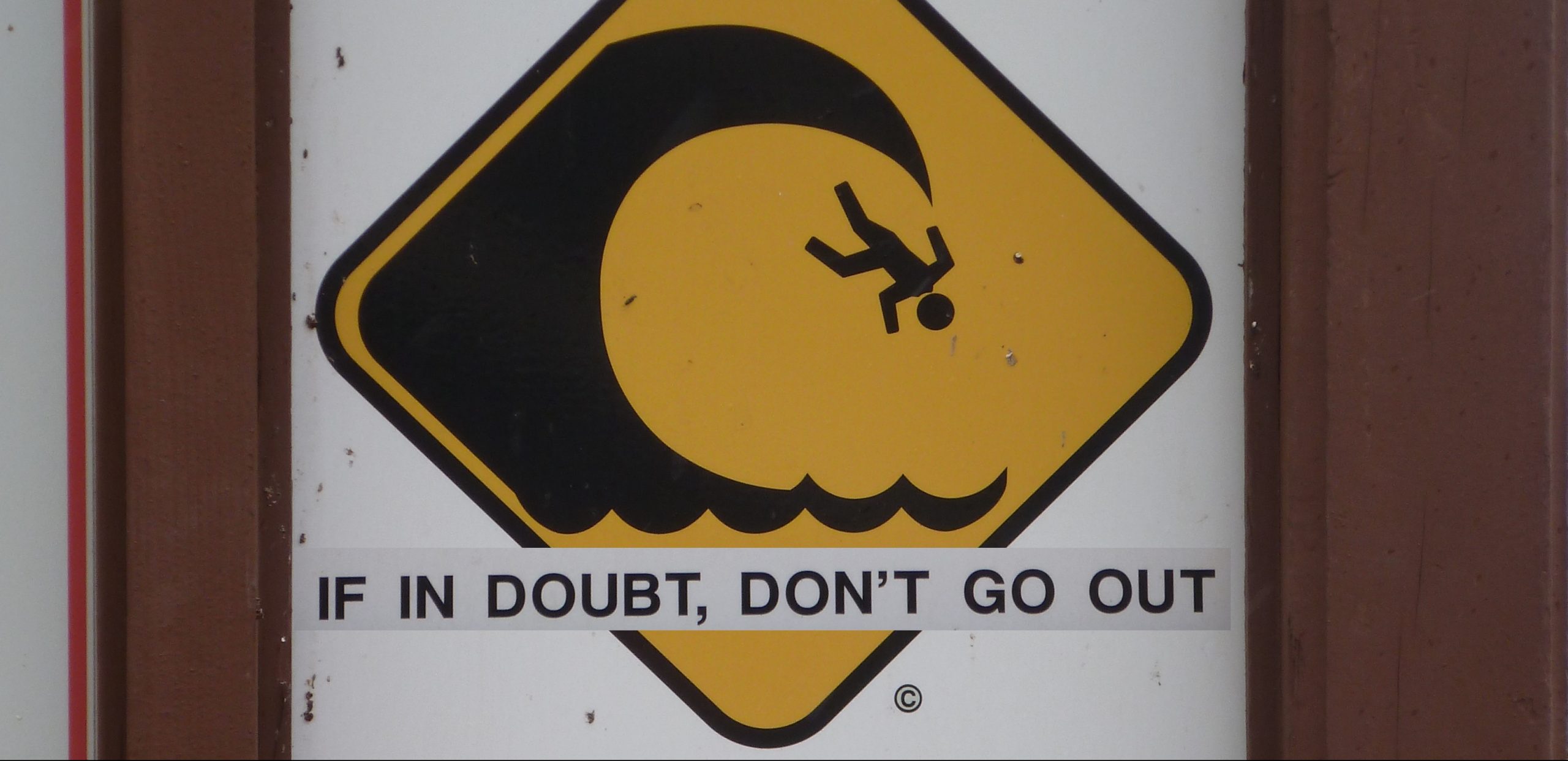

What are we to make of probability weighting, then? Just like in the case of utility, I don’t recommend using the concept at all. “Probability weighting” is an extremely complicated way of expressing a rule known by all living things. A bit of surfing wisdom: if in doubt, don’t go out.

p.s. Alex Adamou, Mark Kirstein, Yonatan Berman and I have put up a draft manuscript, which you’re invited to comment on: https://researchers.one/articles/20.04.00012.

_____________________________________________________________________________________________________


Really interesting – thanks.

Reading this and looking at the right (under-weighting high probability events) side of figure 1 made me think of the common adage, applied to technology, social/political change, etc. “we overestimate what can be accomplished in the short term and underestimate what can be accomplished in the long term.”

This post challenges me. It seems easier than other posts. It’s closer to something that I could eventually understand.

Yet, I wish there was a skill tree available, minus any unnecessary math, to understand the basics of probability starting with basic statistics and going to ergodicity. I wish there was a simplified model of ergodicity; something for a practitioner that better explained all these concepts.

I know I haven’t mastered the probability prerequisites. Yet, I feel even if I had gotten to the point of diminishing marginal returns with the prerequisites that I might still not understand this post.

I would like to better understand this stuff without knowing all the math.

If you want to get rid of the math, what remains is: the outside observer wants to be right, the decision maker wants to survive (or, in Taleb’s “skin in the game” framework: decision makers who didn’t prioritize survival can no longer be found in the population since they didn’t survive).

I just wouldn’t let the DO get away with defining “right” or “rational” as a behavior that gets you killed. That’s really not how language should be treated.

Without the math: Darwin said something similar.

I wish there was a more simplified version of this information available. Preferably, a simplified version with a skill tree, learn this concept first, then this, and this is how all of this stuff impacts your life.

I don’t find this information simple.

I want to find the bridge between the probability education in Nate Silver’s and Tetlock’s books and the idea of ergodicity.

“Ergodicity economics thus suggests once again that economics jumps to psychological explanations too soon and without need.”

“A bit of surfing wisdom: if in doubt, don’t go out.”

If someone chooses to go out, are they irrational? Is their decision psychological? If you never hear of them, and if you unconsciously select test subjects that are like you, will you completely miss the psychological nature of most risk decisions?

Also, in finance, you can hedge uncertainties. You can tie your time-series to the ensemble average. Thus the average goes up, potentially raising everyone, if they all tie themselves to an ensemble average dragged upwards by the good luck of a few out-performers.

Thus the policy solution is to tie individual time series averages to ensemble averages, using basic income say. The financial solution to the ergodicity problem is Exchange Traded Products: you can freely buy shares in an index of the ensemble average.

To your final point, what happens when everyone is tied to the ensemble average? Who is left to define the movement of the ensemble (index and its constituents)?

Great post, Ole, looking forward to the formal writeup. I have to admit, however, I became suspicious as soon as I encountered the idea of “Temporal frequentist probability”. The later mention of Taleb’s ‘ludic fallacy’ gives a great context as to why: real life is not a casino. Any situation that behavioural economics (allegedly) has something to say about has no meaningful base rate.

Granted, you raise a good point as to what probabilities even physically mean that requires we at least attempt to define them. So I wonder what you make of the idea that they “mean”: the odds of a deemed fair bet? I realise this shifts the problem into an entirely different domain as this clearly only has any meaning in terms of how people think and are willing to behave. It’s even trickier given almost nobody articulates the reasons for relevant actions in this way, so you couldn’t do it based on any kind of self-reporting. But maybe the domain shift merits something along the lines of the Copenhagen experiment? Apply the EE math in the context of ‘revealed preferences’ of buyers of insurance, from real life data or in a controlled environment?

I realised as I was typing this comment that, actually, this might invite even more relevant nuance: surely the revealed odds of a deemed fair bet will vary based on how much you are required to wager? You can punt £10 on the football, but you probably wouldn’t remortgage your house on the same gamble. Similarly, everybody buys home insurance, but there is probably a much higher incidence of ‘ruinous accidents’ to some or other low budget item (I seem to be breaking a lot of plates lately) that nobody cares enough about to insure against. This seems to invite a ‘change in wealth dynamic’ as a helpful analytical tool, and hence something EE can explain that BE cannot.

I’d point to Robin Hanson, Alex Tabarrok, and Harry Crane as starting points to develop this thinking, but I would also caution that this set is drawn exclusively from those I happen to follow on Twitter! I’m sure other commenters have a deeper knowledge of the relevant literature …

You only get to drown once.

Trump got to be President after four bankruptcies …


Tangential question: you’re working with PDFs. Are there any questions that can be addressed by interpolating germane PDFs? I can’t find examples of economists, or anyone really beyond high-energy physicists, interpolating probability density functions, but we have the tools necessary to interpolate single or n-dimensional PDFs in open-source software.

Trees do not grow to the sky.


Is there any chance that the ergodicity of an economic system has to do with information entropy, or is that a silly idea?

What I mean is: would preferences become more ergodic as we have more information about good x, and less ergodic as we have less information about good x?

I don’t understand what “In this null model, the uncertainty in a count goes as \sqrt{n}.” means. Is this the standard error of some estimator?
